In our SEO section, we have often discussed what you should consider when applying a structured SEO methodology to your project.

We distinguished between measures that are implemented on your website and measures that take place outside of it.

Backlinks as part of a higher-level SEO understanding

Let’s recall two essential design levels of an SEO-conscious web project:

- Aspects on your website: content, structure, ergonomics, usability, performance, security, data protection, mandatory information, etc.

- Aspects outside of your website: Presence in social networks, backlinks, etc.

For details, feel free to consult our SEO checklist again.

Backlinks are clearly outside of your website, but are not automatically outside your sphere of influence.

What are backlinks?

By a link we mean the connection between two web pages via a clickable hyperlink. You either set such links internally, from one blog article to another, or you set an external link to another website.

If another website links to you, that is a reference from the web back to you, hence the name backlink.

Why are backlinks important?

To answer the question of why you should pay attention to backlinks at all, remind yourself what a search engine like Google actually wants to achieve.

Google has no interest in ranking your site particularly well or particularly badly. The goal of a search engine is always to provide users with the results that fit their query as closely as possible. So your website has to be relevant to a certain topic.

One way of checking this relevance is to review the topics your blog addresses. How your blog articles are linked to each other gives Google information about which topic your site is concerned with, starting with the anchor text used for each link.

Strong Links

But a purely thematic classification does not give Google any indication of how valuable your contributions are ultimately for a visitor.

If, however, several other pages on the same topic link to you, Google assumes that your page has a certain authority on the topic your content deals with.

Backlinks are therefore an important ranking factor for your site and, as a result, for your SEO project.

What should I consider when building backlinks?

Basically, you shouldn’t force backlinks to be built. Links from other sites are created automatically the more your content matures and thrives in terms of quality and quantity. But of course every new web project starts from scratch, and that’s why we would like to give you a few tips on how you can build a healthy backlink profile without forcing your luck.

But first, the following points should serve as a guide:

- Organic links are created without you having to do anything. Trust that other websites will link to you.

- Do not exchange links. Google now recognizes very well which page owners have a certain “proximity” to one another and whether they are using the SEO strength of their own website to help others.

- Don’t take money for a link that you put on another page.

- Don’t offer money to anyone for a link that appears on their page.

- Do not write guest articles for other blogs that hardly differ from one another in content, or that have already appeared in the same or similar form on your own or other websites.

- Don’t enlist your website en masse in link networks. Such backlinks are of very poor quality and, if there are too many of them, can even be detrimental to your Google ranking.

- Do not let other sites link to you with money keywords such as “Earn money online” or the like as anchor text. Today, Google recognizes these very well and ascribes little importance to them.

- Always make sure that your backlink profile does not become too one-sided. The anchor text should vary: the brand name in one place, a source reference, a recommendation or an article title in others, and sometimes simply the URL. The profile should also contain a mix of links marked rel=“nofollow” and regular followed links. This results in an organic backlink profile.

At the last point you can already see: the best backlink profile grows completely by itself and without your intervention.
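The anchor-text mix from the last point can be checked with a few lines of Python. This is only a minimal sketch: the sample data, the function names and the idea of looking at the most common anchor are assumptions for illustration, not part of any particular tool.

```python
from collections import Counter

# Hypothetical sample data: (anchor text, is_nofollow) for each backlink.
backlinks = [
    ("ExampleBrand", False),
    ("ExampleBrand", True),
    ("https://example.com", False),
    ("source", True),
    ("this article", False),
    ("earn money online", False),
]

def anchor_share(links):
    """Return each anchor text's share of all backlinks (0.0 to 1.0)."""
    counts = Counter(text.lower() for text, _ in links)
    total = sum(counts.values())
    return {text: n / total for text, n in counts.items()} if total else {}

def nofollow_share(links):
    """Return the fraction of backlinks that are marked nofollow."""
    if not links:
        return 0.0
    return sum(1 for _, nofollow in links if nofollow) / len(links)

shares = anchor_share(backlinks)
dominant = max(shares, key=shares.get)
print(f"most common anchor: {dominant!r} ({shares[dominant]:.0%})")
print(f"nofollow share: {nofollow_share(backlinks):.0%}")
```

If a single anchor text, especially a money keyword, dominates the list, the profile is probably too one-sided.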

How can you build targeted backlinks?

If you’re supposed to avoid all of the above, you may be asking yourself: what’s left? Well, there are quite a few things you can do without manipulating your backlinks:

- Write two to three very well-researched articles that you present to other blogs as guest articles. These blogs should have the highest possible reputation: high domain age, thematic soundness, editorial review. The rule here is: the harder it is for you to get in, the higher the chance that you will get a positive effect.

- Be active in networking with other bloggers. Whether at trade fairs, on social media or on the beach: if you prove yourself and your content skills, bloggers will either promote your content in their own channels or even link to you permanently from a real article.

- Write your own articles with as much depth and substance as possible. Great content gets linked all by itself, you can count on it. One way to encourage this is to include your own definitions for terms that so far have only scattered and widely differing definitions. Other sites may then cite you as a source if they agree with your definition.

For backlinks you specifically seek out (especially in guest articles), make sure that the link to your page is not devalued by a nofollow attribute. That would make your efforts worthless.

How can I check which backlinks I have?

There are different ways for you to check whether you have backlinks and which ones they are. Essentially, three variants can be distinguished here.

Option 1: Query Google directly

A very simple, but also very imprecise way is to enter the following in Google:

link:YourDomain.tld -site:YourDomain.tld

You replace “YourDomain.tld” with your own domain. This tells Google to list the links to your website and to ignore your website’s own internal links.

Unfortunately, you will quickly find that these results also contain many entries that are not real backlinks but merely resemble your domain name in spelling.
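If you want to run this query for several domains, you can build the query string and a matching search URL programmatically. The following is a small sketch using only the Python standard library; the function names and the example domain are assumptions for illustration.

```python
from urllib.parse import quote_plus

def backlink_query(domain):
    """Build the query: links to the domain, excluding the domain itself."""
    return f"link:{domain} -site:{domain}"

def search_url(domain):
    """Return a Google search URL with the URL-encoded query."""
    return "https://www.google.com/search?q=" + quote_plus(backlink_query(domain))

print(backlink_query("example.com"))
print(search_url("example.com"))
```

The imprecision described above remains, of course: the sketch only saves typing, it does not improve the results.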

Option 2: Use special backlink tools

The following tools specifically track backlinks:

- https://monitorbacklinks.com (from $25 monthly)

- https://de.semrush.com (from $99.95 monthly)

- https://ahrefs.com (from $99 monthly)

- https://www.sistrix.de (from €100 monthly)

- https://www.xovi.de (from €99 monthly)

- and many more, with similar prices

These tools are usually part of large SEO suites for professional agencies or providers with domains such as ebay.de and others. You will rarely need these tools, but they are a more accurate way of tracking your backlinks than Google itself.

Option 3: Use the SERPBOT backlink checker

Our own tool offers a very inexpensive alternative to the professional tools mentioned above, without sacrificing functionality.

The SERPBOT Backlink Checker is part of our regular Pro subscription at EUR 17.00 per month.

What if I don’t want a specific backlink from a page?

That is a very important question, because spam sites and other sites with a negative web presence can actually harm your ranking as soon as they link to you. For this case there is the Google disavow tool. You can use it to tell Google to disregard certain pages that you have found in a backlink tool. They then no longer have a negative effect on your ranking.

But be careful: if you are not sure, you should not disavow a link. You can also harm yourself by disavowing a link that comes from a website Google views positively, even if you do not think so yourself.
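The disavow tool expects a plain text file with one entry per line: either a full URL, or a whole domain prefixed with domain:, with lines starting with # treated as comments. A minimal sketch for generating such a file could look like this; the function name and the sample entries are hypothetical.

```python
def build_disavow_file(domains, urls, note=""):
    """Return disavow file content: an optional comment, then domains, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Whole domains are written with the "domain:" prefix.
    lines += [f"domain:{d}" for d in sorted(set(domains))]
    # Individual pages are listed as full URLs.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"

# Hypothetical entries found in a backlink tool.
text = build_disavow_file(
    domains=["spam-directory.example"],
    urls=["https://blog.example/bad-page.html"],
    note="links found in backlink tool",
)
print(text)
```

Given the warning above, it makes sense to review every entry by hand before uploading the file.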

The little backlink conclusion

Links from other sites to your site are an important indicator for search engines of the relevance and authority of your site. They prove the popularity of your content on thematically related pages. You can encourage backlinks to be built by networking and posting guest articles. But first and foremost, you should publish first-class content. This will be linked in any case. Be patient.

Some backlinks can have a negative effect on your ranking. You can disavow such links with Google. Backlinks can be checked using various tools. You are also welcome to use our own tool. We wish you every success and many organically grown backlinks. Stay tuned! 🙂

Every methodically implemented SEO project begins with the question of which thematically appropriate keywords your own website should address. However, this simple-sounding question involves all kinds of research that can be done either manually or automatically. In this post we take a look at what to do and how it can be done.

Research tasks for SEO projects

The goal of an SEO-optimized website is to get as many visitors as possible who are interested in the topic on offer. In order to meet this goal, the following questions must be answered:

- Which topic does my website specifically serve?

- Which keywords fit the topic of your own website?

- Which of these keywords are people looking for on Google each month?

- How many search queries do these keywords actually get?

- What competition (SEO competition) do the frequently searched keywords have?

- What keywords are left that have a good search versus competition ratio?

Only when these six questions have been answered systematically will you be able to fill your own website with content that is optimized for keywords that people actually search for and for which you have a chance of appearing in the top positions on Google.

Keyword research options: by hand or tool?

Basically, by using the Google AdWords Keyword Planner and the conscientious use of Excel or Numbers tables, all six questions can be answered in such a way that a reasonable SEO result is achieved.

However, going this way by hand requires an enormous amount of time and / or money that can no longer be invested in creating unique content. In addition, Google’s own Keyword Planner deliberately no longer outputs exact values.

At the same time, however, the above questions can all be solved arithmetically and require little creativity. The necessary amount of imagination is limited to entering a seed keyword (i.e. an initial search term) that fits the topic of your own website.

Seed-Keyword

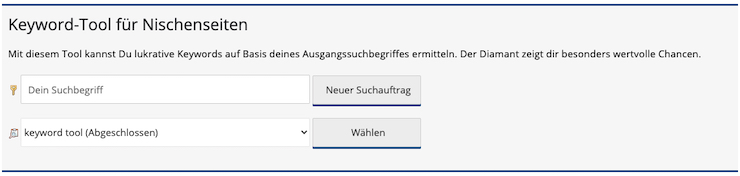

It is therefore advisable to use a keyword tool that can do the steps mentioned automatically. How exactly does that happen?

Tool-supported keyword research for opportunity and competition analysis

A keyword tool works according to the following scheme:

- Input of a seed keyword by the user, which is thematically appropriate to their own blog or website.

- Automatic identification of keywords that are often searched for by visitors in combination with this seed keyword.

- Automatic query of the search volume (traffic) for each of these keywords.

- Automatic query of the competition for each keyword on Google AdWords (the number of advertisements placed for a keyword shows how heavily it is being advertised on the market)

- Automatic query of the CPC (cost per click) of each keyword on Google AdWords (this gives you a feeling of the value the competition attaches to a certain keyword – provided that advertisements are placed specifically for it)

- Automatic calculation of the quotient from search volume and competition factor

In this way, the tool automatically identifies those keywords that are (a) searched for by users and which (b) have so far received less attention from the market. Writing articles (texts) for precisely these niche keywords is ultimately a guarantee for permanent and free traffic that can flow to your own website or blog.
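The last step of the scheme, the quotient of search volume and competition, can be sketched as follows. The competition scale from 0.0 to 1.0, the lower bound that avoids division by zero, and the sample figures are assumptions for illustration; a real tool may weight these values differently.

```python
from dataclasses import dataclass

@dataclass
class Keyword:
    term: str
    monthly_searches: int
    competition: float  # assumed scale: 0.0 (none) to 1.0 (very high)

def opportunity(kw, floor=0.01):
    """Quotient of search volume and competition; floor avoids division by zero."""
    return kw.monthly_searches / max(kw.competition, floor)

# Hypothetical results for one seed keyword.
keywords = [
    Keyword("seo", 5000, 0.95),
    Keyword("backlink checker free", 1200, 0.30),
    Keyword("check backlinks of a blog", 400, 0.05),
]

ranked = sorted(keywords, key=opportunity, reverse=True)
for kw in ranked:
    print(f"{kw.term}: {opportunity(kw):.0f}")
```

In this made-up data set, the keyword with the lowest search volume comes out on top because almost nobody competes for it, which is exactly the kind of niche keyword described above.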

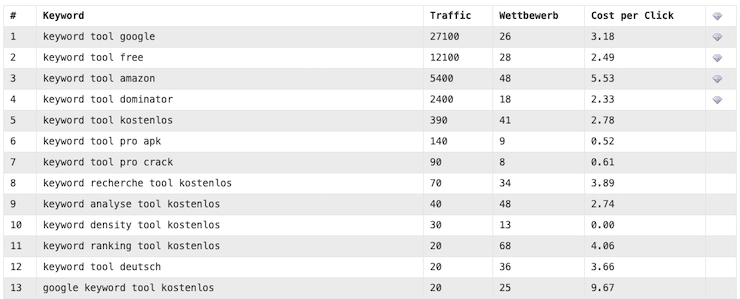

Google Keyword Tool

The conclusion about the keyword tool for Google

A systematic analysis of keywords searched for by users, their search volume and the competition behind them is necessary in order to bring traffic to your own site. This process is enormously complex and also requires access to and knowledge of Google’s own tools and how they work. In addition, Google no longer gives exact search volume values to users who do not run any active advertisements in Google AdWords.

A keyword tool for Google solves these problems by automatically analyzing search terms, their search volume and their competition based on a seed keyword. Algorithms then ensure the output of specific keyword recommendations that have not yet been addressed on the market. This can be used to gain traffic from Google searches on your own website. Every blogger can concentrate specifically on what corresponds to his core: the creation of unique content on his topic.

The SERPBOT keyword tool is at your side with advice and support. We wish you every success with your next SEO project!

You may have already wondered why an individual area or even your entire domain is falling in the rankings, although in your opinion you have already done everything in your power to keep your site clean. You adhere to all common standards, your site is high-performance, secure, structured, naturally linked and provided with high-quality content. Nevertheless, the rankings on Google and co are not what you expect. The reasons are often what we want to call “black sheep” here.

What are black sheep?

There are articles that Google doesn’t like for a variety of reasons. The effect can be that this one article, the category it belongs to or the entire website is penalized. This can be expressed by a slight reduction in the rankings or by a penalty, which has a far greater impact on the rankings.

If you’ve already implemented our checklist for high quality websites, there may be an area left that is constantly on the move on Google: the authority of your website in relation to a certain topic. It might sound like you couldn’t do anything about it, but that’s not true.

Often it is a few individual articles – maybe 3 out of 76 – that Google considers sensitive.

A silent contribution

An example of such an article could be that you take up a topic relevant to health, about which you do not have sufficient knowledge from Google’s point of view. Search engines simply want to prevent this “layman’s tip” from spreading around the world – and that’s right, of course.

How do you recognize these problem articles?

For the problem of detecting black sheep, we have developed two features in our SERPBOT:

- A keyword filter with a graph that gives you the ranking history of the filtered keywords. That may sound trivial, but this often provides extremely interesting findings, as can be seen in the following examples.

- A special feature “Rankings per URL”, which shows you the temporal course of the mean values of your rankings for the selected subpage. With this tool you can see in a very targeted manner whether Google treats certain sub-pages disadvantageously. The rankings of all keywords that have ever received rankings for the respective subpage are taken into account when calculating and displaying the mean values.
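The idea behind mean values per URL can be illustrated with a short sketch. It is not the SERPBOT implementation: the data layout, the function name and the sample values are assumptions chosen only to show the calculation.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical observations: (date, url, keyword, ranking position).
observations = [
    ("2024-01-01", "/breathing-tips", "kw a", 4),
    ("2024-01-01", "/breathing-tips", "kw b", 8),
    ("2024-01-08", "/breathing-tips", "kw a", 20),
    ("2024-01-08", "/breathing-tips", "kw b", 40),
    ("2024-01-01", "/seo-basics", "kw c", 5),
    ("2024-01-08", "/seo-basics", "kw c", 5),
]

def mean_rank_per_url(rows):
    """Return {url: {date: mean position over all keywords ranking for that url}}."""
    buckets = defaultdict(list)
    for date, url, _keyword, position in rows:
        buckets[(url, date)].append(position)
    history = defaultdict(dict)
    for (url, date), positions in buckets.items():
        history[url][date] = mean(positions)
    return history

history = mean_rank_per_url(observations)
for url, series in history.items():
    print(url, dict(sorted(series.items())))
```

A subpage whose mean position worsens sharply while other subpages stay stable, like the first URL in this sample, is a candidate for closer inspection.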

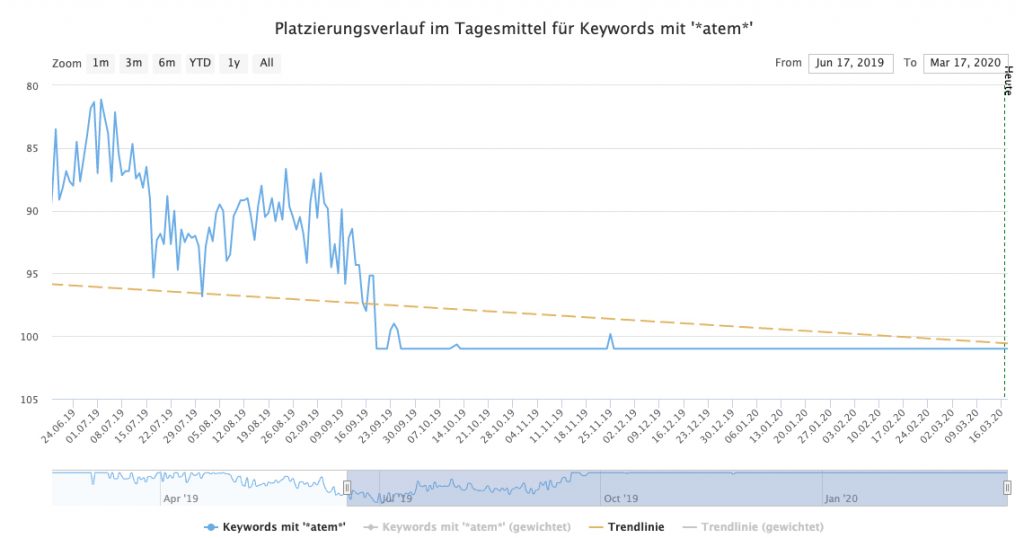

Example #1: *atem*

Here we see the ranking curve of articles that contained “*atem*” (German for “breath”) and were systematically downgraded by Google until they finally no longer had any rankings.

Ranking slump for sample keywords containing “atem”

Let’s look at another example.

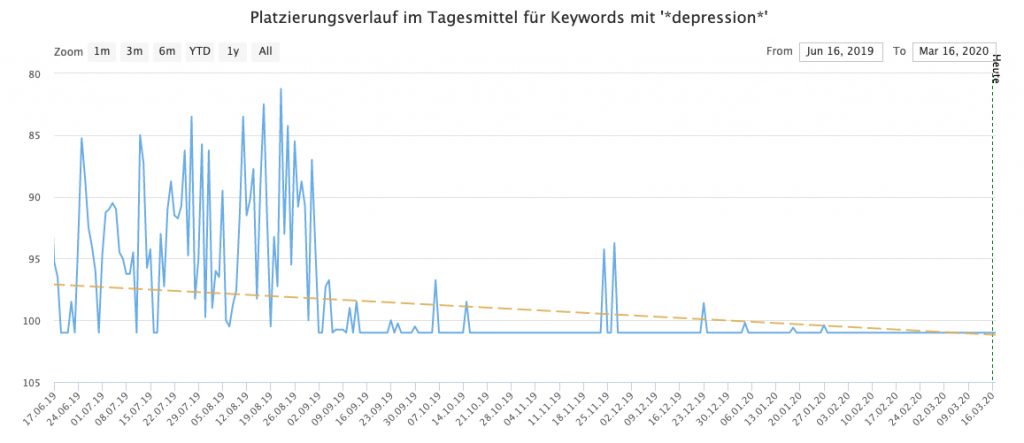

Example #2: *depression*

Here we see the ranking history of some articles that contained the word “*depression*”. These articles also ranked worse and worse and were finally removed from the index, which produced a flat zero line.

Ranking slump for sample keywords related to depression

This way we can clearly see that some articles seem to be considered problematic by Google. Here, of course, the Google Medic update immediately comes to mind, which classifies health-related content as critical if the respective page is not an authority in this area. This is of course understandable, nobody wants advice that is incomplete or even harmful to health.
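The wildcard filter used in both examples can be approximated in a few lines. Again, this is only a sketch under assumptions: the sample keywords, the weekly positions and the rule "ranked once, no ranking at the end" are made up for illustration and do not describe how SERPBOT works internally.

```python
from fnmatch import fnmatchcase

# Hypothetical ranking history: keyword -> weekly positions, None = not ranked.
history = {
    "atemnot ursachen": [12, 25, 60, None],
    "atemtechnik lernen": [8, 30, None, None],
    "seo checkliste": [5, 4, 6, 5],
}

def filter_keywords(data, pattern):
    """Keep only keywords that match a wildcard pattern such as '*atem*'."""
    return {
        kw: positions
        for kw, positions in data.items()
        if fnmatchcase(kw.lower(), pattern.lower())
    }

def lost_all_rankings(positions):
    """True if the keyword ranked at some point but has no ranking at the end."""
    return positions[-1] is None and any(p is not None for p in positions)

matches = filter_keywords(history, "*atem*")
dropped = sorted(kw for kw, pos in matches.items() if lost_all_rankings(pos))
print(dropped)
```

Keywords that share a term and all end without a ranking point to a topic, rather than a single article, that is being treated as problematic.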

How can one deal with these articles?

We can assume that Google will also treat the rest of the site less favorably if some articles fall out of line in terms of content and / or authority. After all, Google wants to prevent these articles from being found. It doesn’t help much if the rest of the site is polished and the other articles still have strong rankings.

Various precautions can be taken so as not to let the rest of the page suffer from the effect of negative articles.

This includes:

- The overhaul of these black sheep: If you trust yourself, give these articles a major overhaul. This should include both the content-editorial revision and the review of external links to and from these articles. Here, of course, there is initially the risk that these optimizations will not result in any changes.

- Separating these articles on a different domain: You can set up a second website, where potentially critical articles find their shelter. From there, a possible penalty for this content no longer has a negative effect on your main domain. Avoid bidirectional links between the two domains here.

- Deleting these articles: When in doubt, the most sustainable means of course is to simply remove black sheep. Of course, this will only make sense if these articles form an exception to the content of the rest of the website, so that your site does not depend on these contributions.

What do we learn from it?

The little sheep conclusion

There are articles that Google regards as critical due to a lack of content authority on your domain. These contributions must be identified, changed, moved or removed through creative and tool-supported work.

You can then monitor your rankings again and check whether there is a change in the mean values of the rankings across your domain. In the above two examples, the affected articles were removed and the website recovered completely after about four weeks.

We wish you every success in identifying and treating your black sheep!